Particle Physics and Artificial Intelligence

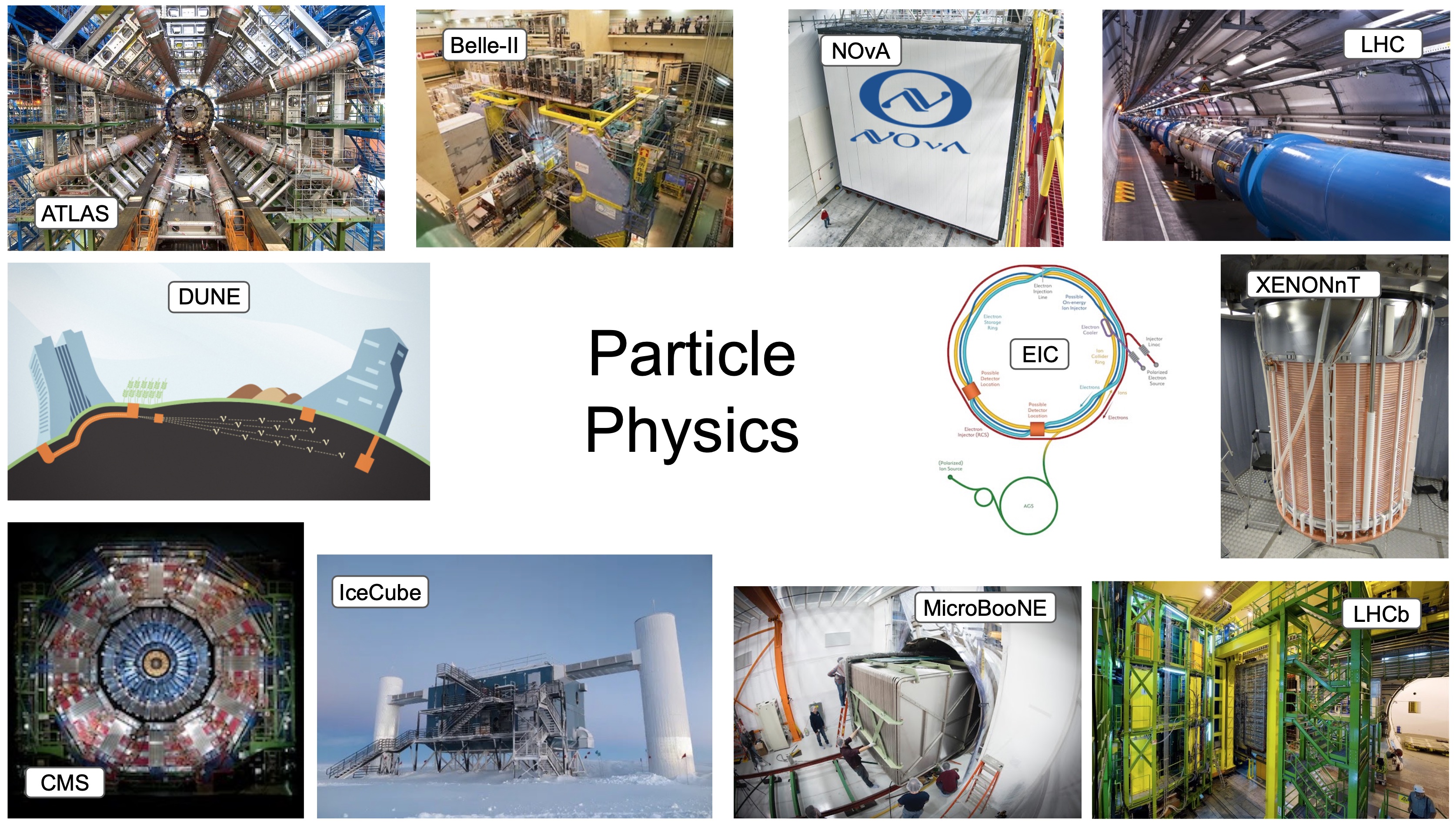

Experimental particle physics addresses some of the most fundamental questions about the universe through facilities that are among the largest, most complex, and most ambitious scientific endeavors ever constructed. Across collider, neutrino, cosmic, and rare-event experiments, these facilities function as massive and continuous data generators, producing petabytes of rich, structured, curated data annually, while discarding most of the raw information due to bandwidth, storage, or latency constraints. The scale, complexity, and structure of these datasets align with the strengths of modern Artificial Intelligence (AI): high-dimensional pattern recognition, rare-signal inference, low-latency decision making, and the orchestration of complex systems spanning hardware, software, and human expertise. AI can play a transformative role by enabling experiments to extract and retain more information from data, extending the discovery potential, and reducing the time from data-taking to discovery. It can also improve the efficiency and sustainability of long-running facilities and increase sensitivity to subtle or unexpected phenomena. Now is a pivotal moment: experiments currently in operation or under construction will define the scientific output of particle physics for the next several decades, while unprecedented national investments in AI, advanced computing, and workforce development create a rare opportunity to couple our scientific challenges with foundational AI research. This recent whitepaper presents our community vision and an actionable plan to seize this moment.

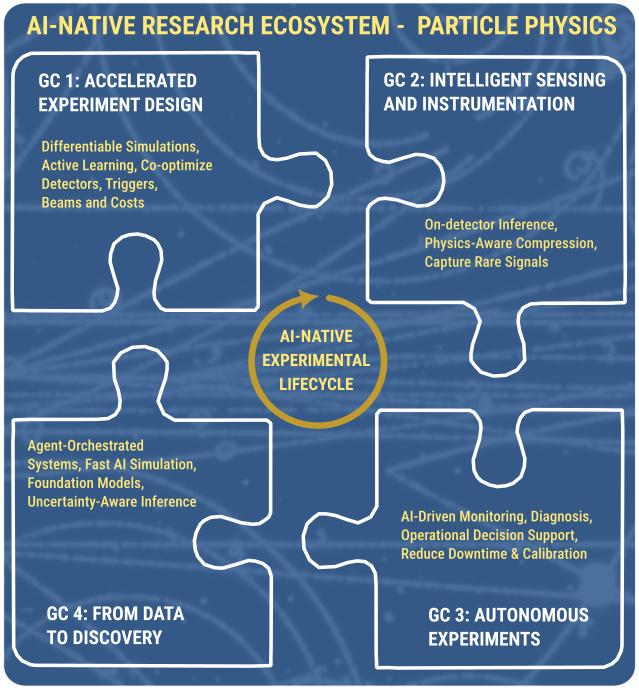

Our vision is to embed AI end-to-end across the experimental lifecycle, from the co-design of accelerators and detectors to intelligent sensing, data acquisition, autonomous operations and calibration, and accelerated analysis for discovery. In this “AI-Native” paradigm, experiments become continuously learning systems: design and operations are optimized over time, more information is retained and understood, and scientists spend less time on mechanical steps and more on scientific interpretation, directly advancing the national goal of dramatically increasing research output and discovery. This approach also maximizes the return on current investments and enables next-generation facilities to be conceived and operated with AI as a core capability.

Grand Challenges: This vision is organized around four Grand Challenges that form a self-reinforcing engine for an AI-Native ecosystem. Accelerated Experimental Design uses differentiable, agent-guided optimization to co-design accelerators and detectors so that ambitious ideas become buildable, higher-impact experiments on faster timescales with reduced technical risk and cost. Intelligent Sensing & Instrumentation moves intelligence upstream via trigger-less or AI-assisted readout, physics-aware compression, and real-time inference to preserve rare, unexpected, or time-critical signals while operating within bandwidth and storage constraints. Autonomous Experiments transforms labor-intensive, reactive operations into proactive, resilient, and continuously calibrated systems, capturing more high-quality data with less downtime and preserving institutional knowledge over decades-long experiment lifetimes. From Data to Discovery integrates foundation models, fast AI-enabled reconstruction and simulation, and agent-orchestrated workflows to compress analysis cycles by orders of magnitude and open new regions of theory space to exploration. Advancing any one challenge amplifies the others, expanding scientific reach and productivity while positioning the U.S. as a global leader in AI-powered particle physics.